2025-11-03

Continuous Autoregressive Language Models

(Chenze Shao, Darren Li, Fandong Meng, Jie Zhou)

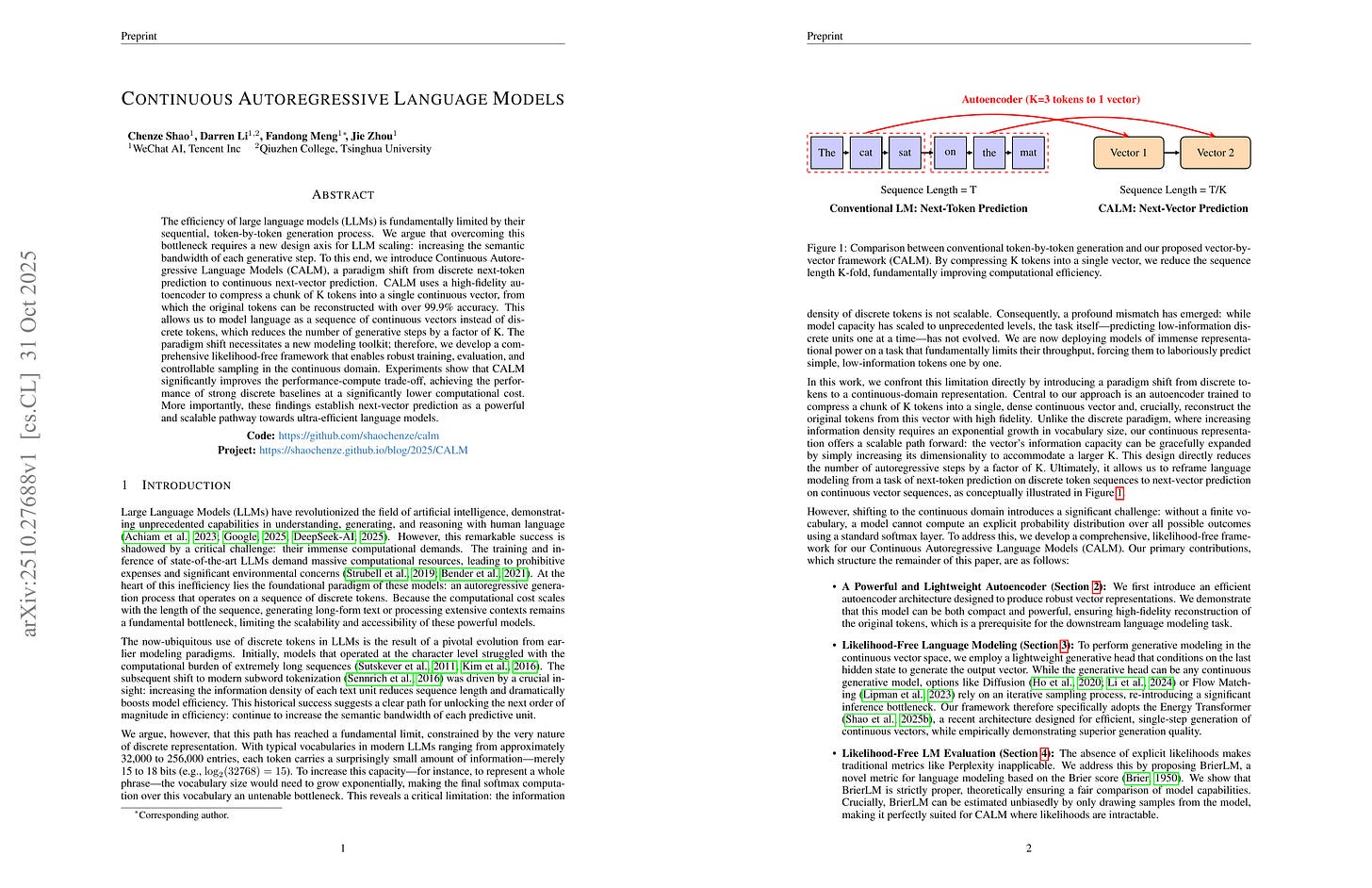

The efficiency of large language models (LLMs) is fundamentally limited by their sequential, token-by-token generation process. We argue that overcoming this bottleneck requires a new design axis for LLM scaling: increasing the semantic bandwidth of each generative step. To this end, we introduce Continuous Autoregressive Language Models (CALM), a paradigm shift from discrete next-token prediction to continuous next-vector prediction. CALM uses a high-fidelity autoencoder to compress a chunk of K tokens into a single continuous vector, from which the original tokens can be reconstructed with over 99.9% accuracy. This allows us to model language as a sequence of continuous vectors instead of discrete tokens, which reduces the number of generative steps by a factor of K. The paradigm shift necessitates a new modeling toolkit; therefore, we develop a comprehensive likelihood-free framework that enables robust training, evaluation, and controllable sampling in the continuous domain. Experiments show that CALM significantly improves the performance-compute trade-off, achieving the performance of strong discrete baselines at a significantly lower computational cost. More importantly, these findings establish next-vector prediction as a powerful and scalable pathway towards ultra-efficient language models. Code: https://github.com/shaochenze/calm. Project: https://shaochenze.github.io/blog/2025/CALM.

K개 토큰을 압축하는 오토인코더의 Latent를 Autoregression으로 예측하는 모델. Flow matching 같은 좀 더 흔한 방법 대신 Energy Loss를 사용. 이미지 같은 일반적인 Continuous Autoregression에도 효과적인 방법일지?

Autoregressive prediction of latents from an autoencoder which compresses K tokens. They used energy loss for prediction instead of more popular ones like flow matching. Would this be effective for general continuous autoregression? (e.g., images).

#llm #autoregressive-model

ThinkMorph: Emergent Properties in Multimodal Interleaved Chain-of-Thought Reasoning

(Jiawei Gu, Yunzhuo Hao, Huichen Will Wang, Linjie Li, Michael Qizhe Shieh, Yejin Choi, Ranjay Krishna, Yu Cheng)

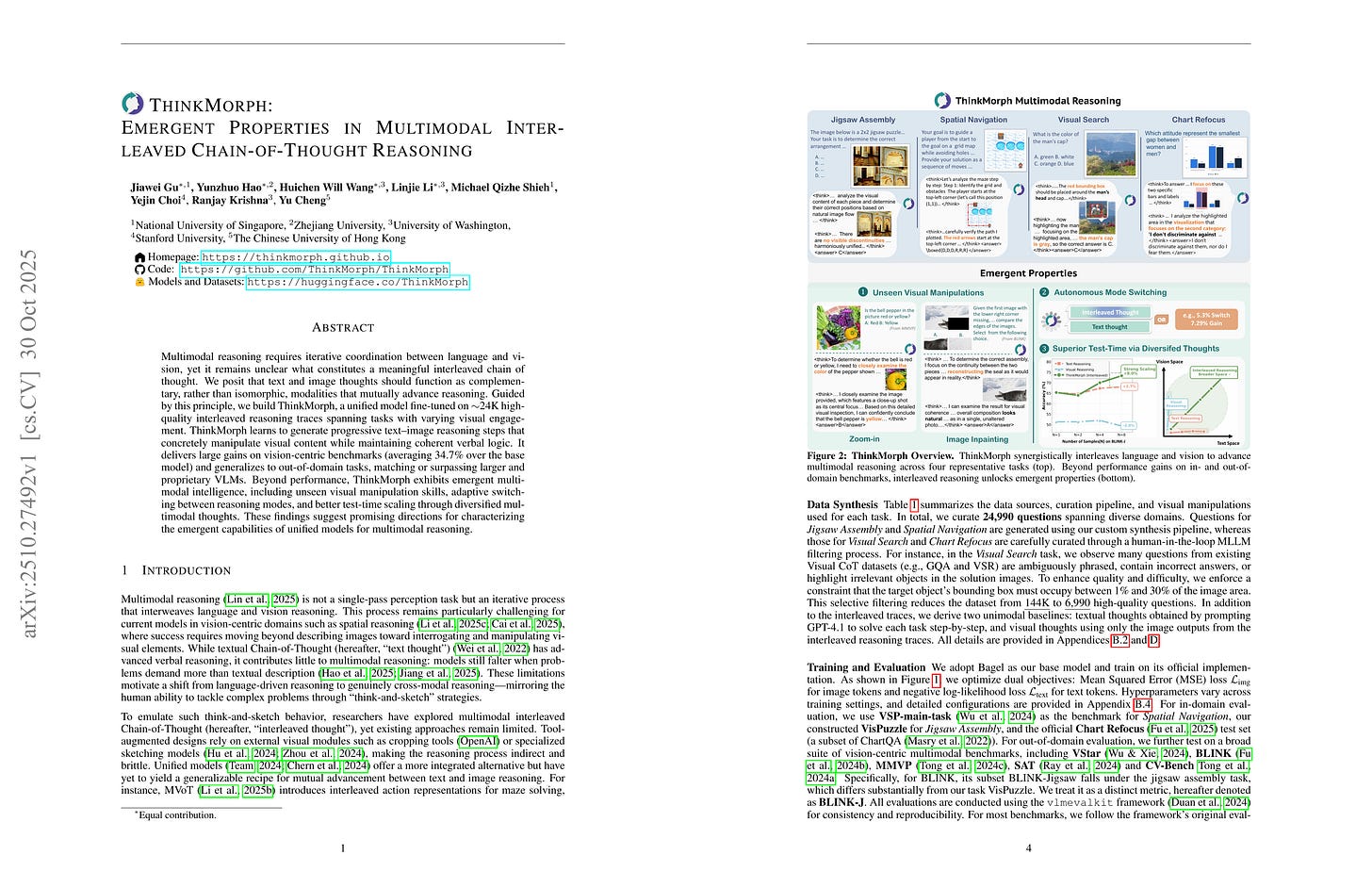

Multimodal reasoning requires iterative coordination between language and vision, yet it remains unclear what constitutes a meaningful interleaved chain of thought. We posit that text and image thoughts should function as complementary, rather than isomorphic, modalities that mutually advance reasoning. Guided by this principle, we build ThinkMorph, a unified model fine-tuned on 24K high-quality interleaved reasoning traces spanning tasks with varying visual engagement. ThinkMorph learns to generate progressive text-image reasoning steps that concretely manipulate visual content while maintaining coherent verbal logic. It delivers large gains on vision-centric benchmarks (averaging 34.7% over the base model) and generalizes to out-of-domain tasks, matching or surpassing larger and proprietary VLMs. Beyond performance, ThinkMorph exhibits emergent multimodal intelligence, including unseen visual manipulation skills, adaptive switching between reasoning modes, and better test-time scaling through diversified multimodal thoughts.These findings suggest promising directions for characterizing the emergent capabilities of unified models for multimodal reasoning.

이미지 생성을 통한 Interleaved Reasoning. 학습을 통해 확대 같은 행동이 나타났다고 함. 더 나은 프리트레이닝을 통해 어쩌면 이미지 추론이 풀리기 시작할지도.

Interleaved reasoning with image generation. Zoom-in is able to emerge from the training. Interesting. With better pretraining maybe visual reasoning can start to be solved.

#reasoning #image-generation